The Assistant SDK is provider-pluggable through an LLMProvider abstraction. Two implementations ship today:

- MetabindAgentProvider: calls Metabind’s hosted Agent proxy at agent.metabind.ai. The proxy holds the LLM key, runs the tool loop server-side, and streams responses back as SSE. Recommended for production.

- AnthropicProvider: bring-your-own-key (BYOK). The SDK calls Anthropic directly from the client. Useful for development, internal tools, or apps where the key reaches the SDK from an authenticated, user-managed source.

You can also conform to the public LLMProvider protocol to plug in something else.

When to pick which

| Mode | Pick when |
|---|---|
| Agent proxy (MetabindAgentProvider) | Production. Your binary ships only a Metabind project token; the LLM key stays on the server. The proxy authenticates the project token, calls the LLM, runs the tool loop, and streams results back. |
| BYOK direct (AnthropicProvider) | Local development; internal tools where the key is provisioned per user from a trusted source; or cases where you explicitly want a different LLM provider, region, or routing policy than the proxy offers. |

Both modes use the same MetabindAssistant, the same MetabindAssistantView, and the same conversation state. Only the provider object changes.

Mode 1: Agent proxy (recommended)

The Agent proxy is a Metabind-managed service at https://agent.metabind.ai. SDKs call POST https://agent.metabind.ai/{orgId}/{projectId}/chat with a Bearer-authenticated streaming request. The proxy authenticates the caller with a Metabind project token, runs the LLM call and the tool loop on the server side, and returns the result as a Server-Sent Events stream.

The apiKey field on MetabindAgentProvider is the Metabind project token, not an LLM provider key. The proxy uses it to authenticate the project; the LLM key is held server-side.
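To make the request shape concrete, here is a minimal sketch that builds (but does not send) the POST using Python's standard library. Only the URL pattern and the Bearer header come from this page; the JSON body key `message` and the helper name `build_chat_request` are hypothetical, and the SDK's real wire format may differ.

```python
import json
import urllib.parse
import urllib.request

AGENT_BASE_URL = "https://agent.metabind.ai"


def build_chat_request(org_id: str, project_id: str, project_token: str,
                       message: str) -> urllib.request.Request:
    """Build (without sending) a chat request for the Agent proxy."""
    # Path: /{orgId}/{projectId}/chat, with each ID percent-encoded.
    url = "{}/{}/{}/chat".format(
        AGENT_BASE_URL,
        urllib.parse.quote(org_id, safe=""),
        urllib.parse.quote(project_id, safe=""),
    )
    # Hypothetical body shape: the real field names are not documented here.
    body = json.dumps({"message": message}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            # The Metabind project token, not an LLM provider key.
            "Authorization": f"Bearer {project_token}",
            "Content-Type": "application/json",
            # Ask for a Server-Sent Events response.
            "Accept": "text/event-stream",
        },
    )
```

Passing the result to `urllib.request.urlopen` would return a response whose lines form the SSE stream.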

What the proxy does

- Authenticates the request with the project token (Bearer header).

- Routes to the LLM provider configured for the project.

- Runs the tool-call loop server-side: when the LLM emits a tool call, the proxy invokes the project’s MCP tool, returns the result to the LLM, and continues until the LLM produces a final answer.

- Streams events back to the client over SSE: message_start (includes conversationId), text_delta, tool_use, tool_result, provider_switch, and message_stop.
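The tool-call loop in the list above is the same loop the SDK runs on the client in BYOK direct mode. The sketch below illustrates its shape using Anthropic's Messages API format (`tool_use` and `tool_result` content blocks); it is not the proxy's or the SDK's implementation. `send` is injected so the loop can run without a network, and the `handlers` mapping is a hypothetical stand-in for a project's MCP tools.

```python
import json
import urllib.request
from typing import Callable, Dict, List

ANTHROPIC_URL = "https://api.anthropic.com/v1/messages"


def anthropic_send(api_key: str, payload: dict) -> dict:
    """One non-streaming call to Anthropic's Messages API (BYOK direct)."""
    req = urllib.request.Request(
        ANTHROPIC_URL,
        data=json.dumps(payload).encode("utf-8"),
        method="POST",
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


def run_tool_loop(send: Callable[[dict], dict], model: str, tools: List[dict],
                  handlers: Dict[str, Callable[[dict], str]],
                  messages: List[dict], max_turns: int = 8) -> str:
    """Call the model, run requested tools, and repeat until a final answer."""
    for _ in range(max_turns):
        payload = {"model": model, "max_tokens": 1024, "messages": messages}
        if tools:
            payload["tools"] = tools
        response = send(payload)
        # Keep the assistant turn (including any tool_use blocks) in history.
        messages.append({"role": "assistant", "content": response["content"]})
        if response.get("stop_reason") != "tool_use":
            # Final answer: concatenate the text blocks.
            return "".join(block["text"] for block in response["content"]
                           if block["type"] == "text")
        # Run every requested tool and return all results in one user turn.
        results = []
        for block in response["content"]:
            if block["type"] == "tool_use":
                output = handlers[block["name"]](block["input"])
                results.append({"type": "tool_result",
                                "tool_use_id": block["id"],
                                "content": output})
        messages.append({"role": "user", "content": results})
    raise RuntimeError("tool loop did not finish within max_turns")
```

In BYOK use you would pass `lambda p: anthropic_send(key, p)` as `send`; in tests, a fake `send` returns canned responses.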

Server-side provider selection

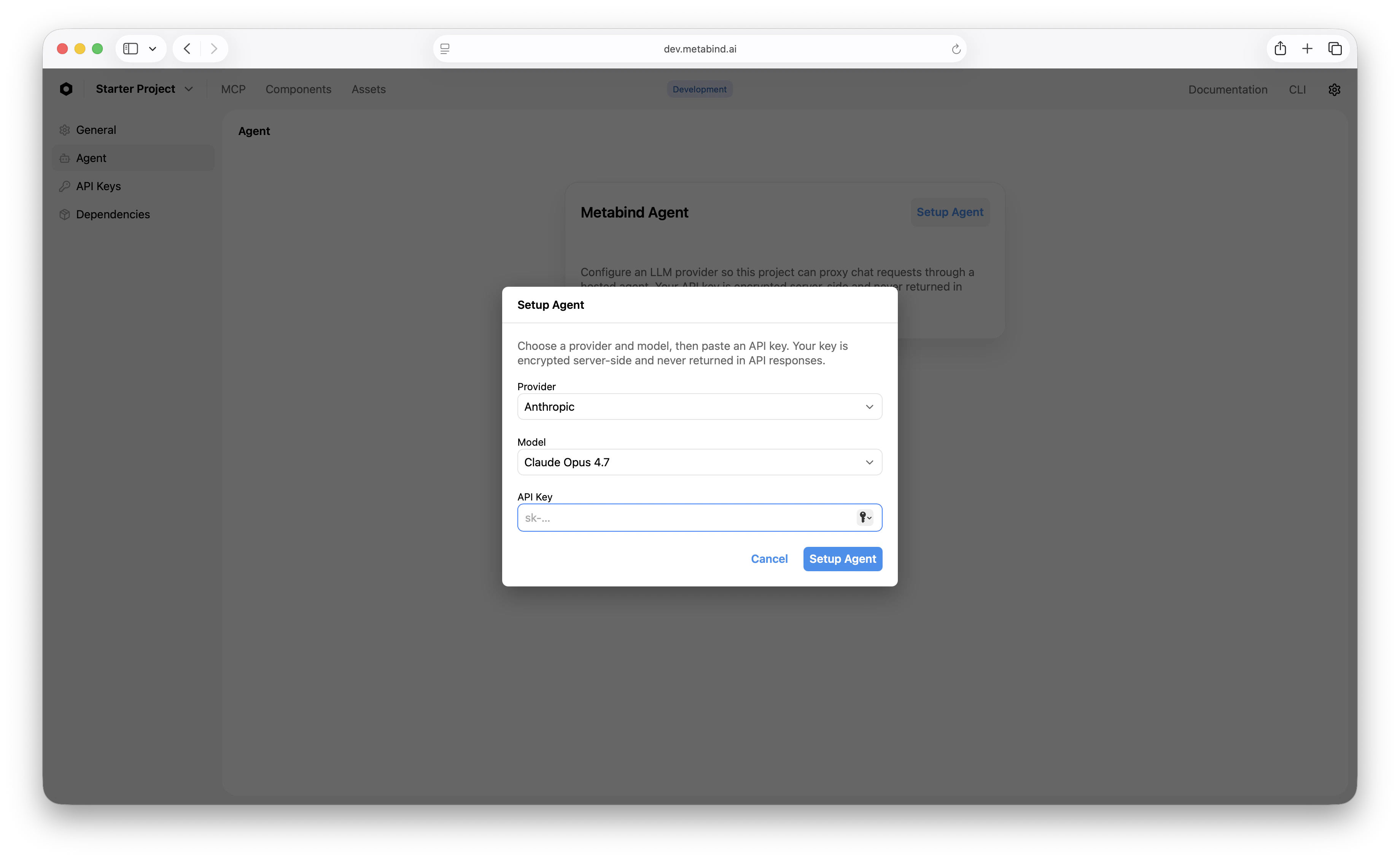

The proxy is multi-provider. Each project picks one of the supported providers in MCP App Studio: Anthropic, OpenAI, or Google. The provider, model, and key are all configured server-side; the client just sees an SSE stream. To switch providers for an entire project, change the setting in MCP App Studio — no client release needed.

Conversation IDs

The proxy stores conversation history server-side. The first event of every response (message_start) includes a conversationId. To continue the same conversation on a later turn (after an app restart, or across devices), echo that conversationId back on the next chat request and the proxy merges history against its stored record.
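Reading the conversationId from message_start requires only a small SSE reader. This is a sketch under two assumptions: each event's `data:` line carries a JSON object, and text_delta payloads use a `text` field (a hypothetical name). Only the event names come from this page. Per the SSE format, a blank line ends an event and an unterminated event at end of stream is discarded.

```python
import json
from typing import Iterable, Iterator, Optional, Tuple


def parse_sse(lines: Iterable[str]) -> Iterator[Tuple[str, dict]]:
    """Yield (event_name, payload) pairs from an SSE line stream."""
    event, data = "message", []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            # A blank line dispatches the pending event.
            if data:
                yield event, json.loads("\n".join(data))
            event, data = "message", []
            continue
        if line.startswith(":"):
            continue  # comment / keep-alive line
        field, _, value = line.partition(":")
        if value.startswith(" "):
            value = value[1:]  # strip the single optional leading space
        if field == "event":
            event = value
        elif field == "data":
            data.append(value)
    # Any event still pending here was never terminated and is discarded.


def collect_reply(lines: Iterable[str]) -> Tuple[Optional[str], str]:
    """Return (conversationId, full text) for one streamed response."""
    conversation_id, text = None, []
    for name, payload in parse_sse(lines):
        if name == "message_start":
            conversation_id = payload.get("conversationId")
        elif name == "text_delta":
            text.append(payload.get("text", ""))  # hypothetical field name
        elif name == "message_stop":
            break
    return conversation_id, "".join(text)
```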

The default chat surface (MetabindAssistantView) handles this for you. If you build a custom UI on top of assistant.send(...), persist the conversation ID alongside whatever else you’re storing.
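For a custom UI, persisting the ID can be as simple as a small file-backed store whose value is echoed on the next request. This is a sketch. The file location, the `ConversationStore` and `next_request_body` names, and the request body keys (`message`, `conversationId`) are hypothetical. The page says to echo the ID but does not document where it goes in the request.

```python
import json
from pathlib import Path
from typing import Optional


class ConversationStore:
    """Persist the proxy's conversationId across turns and app restarts."""

    def __init__(self, path: Path):
        self.path = path

    def load(self) -> Optional[str]:
        if not self.path.exists():
            return None  # no conversation yet: the proxy starts a new one
        return json.loads(self.path.read_text()).get("conversationId")

    def save(self, conversation_id: str) -> None:
        self.path.write_text(json.dumps({"conversationId": conversation_id}))


def next_request_body(store: ConversationStore, message: str) -> dict:
    """Build the next chat body, echoing a stored conversationId if any."""
    body = {"message": message}  # hypothetical body shape
    conversation_id = store.load()
    if conversation_id is not None:
        body["conversationId"] = conversation_id
    return body
```

Call `save` whenever a message_start event arrives, so a restart resumes the same server-side history.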

Mode 2: BYOK direct (Anthropic)

BYOK direct mode bypasses the Agent proxy entirely. The client calls Anthropic with a key you provide and runs the tool loop locally. Use this for development, internal tools, or apps where the key reaches the SDK from a trusted source.

Custom providers

Conform to the public LLMProvider protocol if you need to integrate something else, such as an internal LLM endpoint, a fine-tuned model, or a different vendor. The protocol is small: a streaming method that takes messages and tool definitions and emits chunks in the SDK’s chunk format.
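To illustrate that shape, here is a Python analogue using `typing.Protocol`. It is not the SDK's real signature: `LLMProviderLike`, `Chunk`, its `kind`/`data` fields, and the toy `EchoProvider` are all hypothetical stand-ins for the SDK's own types and chunk format.

```python
from dataclasses import dataclass
from typing import Iterator, List, Protocol


@dataclass
class Chunk:
    """Hypothetical stand-in for the SDK's chunk format."""
    kind: str  # e.g. "text_delta" or "message_stop"
    data: dict


class LLMProviderLike(Protocol):
    """Messages and tool definitions in, a stream of chunks out."""

    def stream(self, messages: List[dict], tools: List[dict]) -> Iterator[Chunk]:
        ...


class EchoProvider:
    """Toy provider standing in for an internal endpoint: echoes the last message."""

    def stream(self, messages: List[dict], tools: List[dict]) -> Iterator[Chunk]:
        for word in messages[-1]["content"].split():
            yield Chunk("text_delta", {"text": word + " "})
        yield Chunk("message_stop", {})


def collect_text(provider: LLMProviderLike, messages: List[dict]) -> str:
    """Drain a provider's stream and join its text deltas."""
    return "".join(chunk.data["text"] for chunk in provider.stream(messages, [])
                   if chunk.kind == "text_delta").strip()
```

Because `Protocol` uses structural typing, `EchoProvider` conforms without inheriting from anything, which mirrors how the surrounding code only depends on the provider object.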

Keys at a glance

| Key | Where it lives | What it gates |
|---|---|---|
| Metabind project token | Your backend (mint per user / per session). Reaches the SDK via your auth flow. | Listing and calling tools on your project; authenticating to the Agent proxy. |
| LLM provider API key | Agent proxy mode: server-side, never in the client. BYOK direct mode: delivered to the SDK by your auth flow, ideally short-lived. | Conversational generation. |

The apiKey accessor is callable, so you can refresh the key on demand without rebuilding the assistant.
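A callable key accessor can be backed by a small caching wrapper that re-fetches shortly before expiry. This sketch assumes your auth flow can return a token together with an expiry timestamp. `RefreshingToken`, the `fetch` hook, and the 60-second skew are hypothetical and not part of the SDK.

```python
import time
from typing import Callable, Optional, Tuple


class RefreshingToken:
    """Callable accessor that caches a token and refreshes it before expiry."""

    def __init__(self, fetch: Callable[[], Tuple[str, float]],
                 skew_seconds: float = 60.0,
                 clock: Callable[[], float] = time.time):
        self._fetch = fetch          # returns (token, expires_at_epoch_seconds)
        self._skew = skew_seconds    # refresh this many seconds early
        self._clock = clock
        self._token: Optional[str] = None
        self._expires_at = 0.0

    def invalidate(self) -> None:
        """Force a refresh on the next call (for example, after a 401)."""
        self._token = None

    def __call__(self) -> str:
        if self._token is None or self._clock() >= self._expires_at - self._skew:
            self._token, self._expires_at = self._fetch()
        return self._token
```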

Choosing a model

The Agent proxy decides the model server-side, configured per project in MCP App Studio. For BYOK, pass the model string explicitly to AnthropicProvider. A few rough heuristics for either mode:

- Default to mid-tier. Sonnet 4.6 is well-priced and capable enough for most assistant workloads.

- Scale up for hard reasoning. When tool selection requires multi-step thinking or your tool set is large (50+ tools), upgrade to Opus 4.7.

- Scale down for simple flows. If your assistant calls one of three tools and replies in a sentence, a smaller model saves cost without quality loss.
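If you choose the model in code for BYOK, you can encode the heuristics above in a small helper. In this sketch the thresholds mirror the prose (50+ tools, roughly three tools), and `pick_model_tier` and its tier labels are hypothetical. You would map each tier to a concrete model string yourself.

```python
def pick_model_tier(tool_count: int, needs_multistep_reasoning: bool,
                    short_single_tool_replies: bool) -> str:
    """Return "large", "mid", or "small" following the rough heuristics."""
    if needs_multistep_reasoning or tool_count >= 50:
        return "large"  # scale up for hard reasoning or large tool sets
    if tool_count <= 3 and short_single_tool_replies:
        return "small"  # scale down for simple flows
    return "mid"        # default
```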

Per-user metering

Once requests go through the Agent proxy, Metabind’s usage tracking captures tokens per project token. If you mint per-user project tokens, the audit trail naturally segments by user. For BYOK direct mode, you’re metering against your own LLM key; use the provider’s dashboard or a backend proxy you control.

Troubleshooting

| Symptom | Likely cause |
|---|---|
| All requests 401 | Project token expired or the proxy can’t validate it; refresh logic broken. |
| SSE stream closes immediately | Proxy rejected the request; check that the project token has access to the MCP project. |
| Tool selection feels wrong | Sharpen tool descriptions in MCP App Studio, or upgrade to a more capable model. |
| BYOK Anthropic 401 | Key invalid or expired; check the provider’s dashboard. |
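A common guard against the broken-refresh case is to retry exactly once with a forcibly refreshed token after a 401. Here is a sketch using Python's standard library. `send_with_refresh`, `make_request`, and the `get_token(force_refresh)` hook are hypothetical, and `opener` is injectable so the logic can be tested offline.

```python
import urllib.error
import urllib.request
from typing import Callable


def send_with_refresh(make_request: Callable[[str], urllib.request.Request],
                      get_token: Callable[[bool], str],
                      opener: Callable = urllib.request.urlopen):
    """Send once; on HTTP 401, force a token refresh and retry exactly once."""
    try:
        return opener(make_request(get_token(False)))
    except urllib.error.HTTPError as err:
        if err.code != 401:
            raise  # other errors are not a token-freshness problem
    # A second 401 with a fresh token means the token itself is rejected
    # (wrong project, revoked credentials), so it propagates to the caller.
    return opener(make_request(get_token(True)))
```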

Related

- iOS SDK: where the LLM provider plugs in for iOS.

- Android SDK: where the LLM provider plugs in for Android.

- Assistant SDK overview: what the Assistant SDK is and which surface to pick.

- Custom host UI: replace the default chat UI with your own.